5 predictions about agentic AI and analytics in 2026

What AI trends will shape analytics in the coming months?

Enterprise-grade AI problems require enterprise-grade streaming solutions

Unless you’ve been living under a rock, you’ve noticed how flooded the consumer AI market is and how successful those products already are. But on the flip side, the enterprise agentic AI market is struggling. Companies are still trying to figure out how to run agentic systems safely in their private networks using their private data.

During a recent talk at Dragonfly’s Modern Data Infrastructure Summit, Redpanda CTO Tyler Akidau discussed the challenges of deploying agentic AI—and how streaming has already solved many of the same problems around infrastructure and data movement.

You can watch the full talk on YouTube for free: Deploying Agentic AI Scalably and Safely in the Modern Enterprise. We’ve also compiled Tyler’s key points in this blog and will walk you through the specific ways in which streaming platforms can help you safely and reliably scale your agentic systems.

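Anthropic distinguishes two types of agentic systems: workflows, in which LLMs and tools are orchestrated through predefined code paths, and agents, in which LLMs dynamically direct their own processes and tool usage.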

Tyler thinks about agentic AI a little differently, defining an agent simply as artificial intelligence that is performing work, with less concern about whether that agent is part of a workflow or fully autonomous.

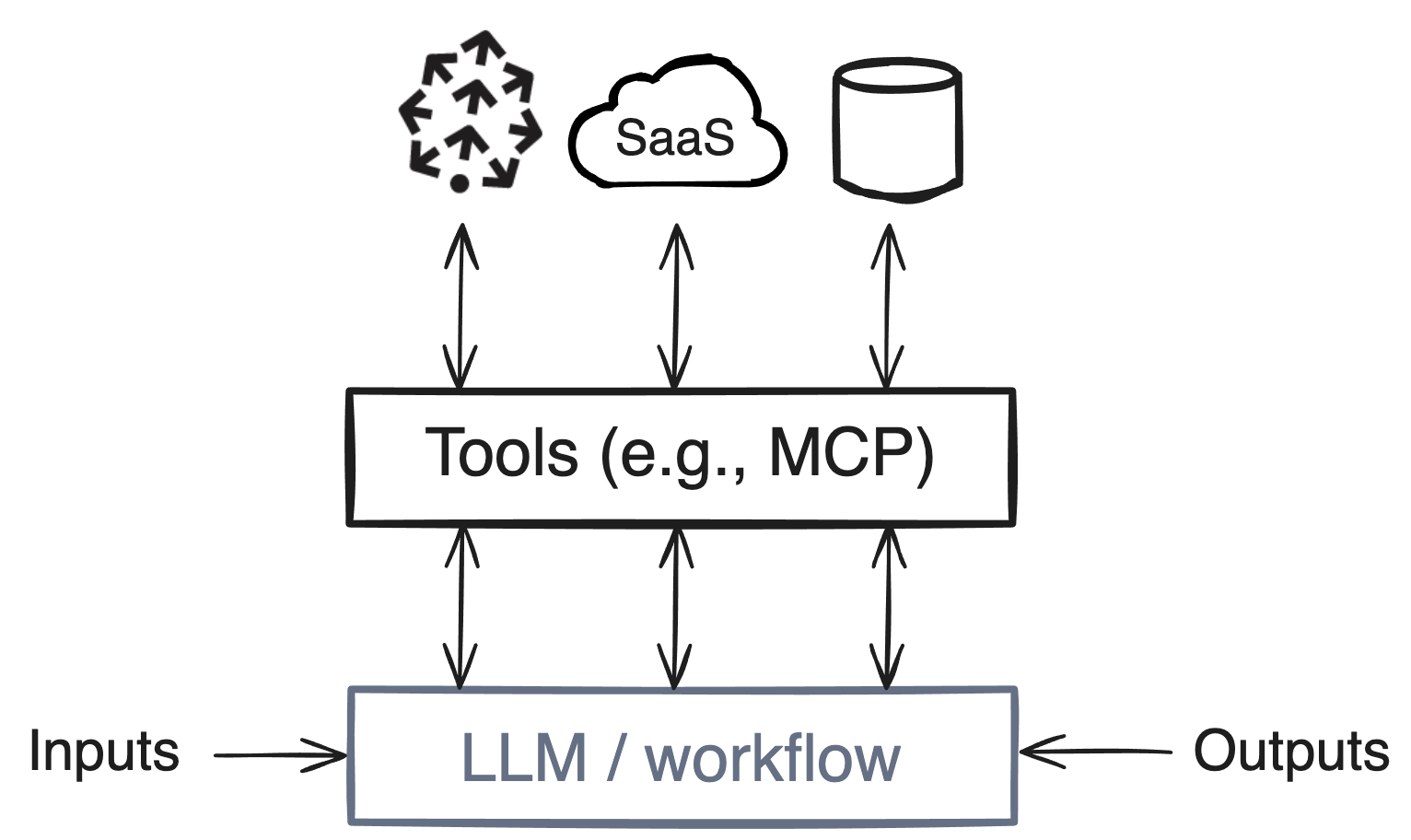

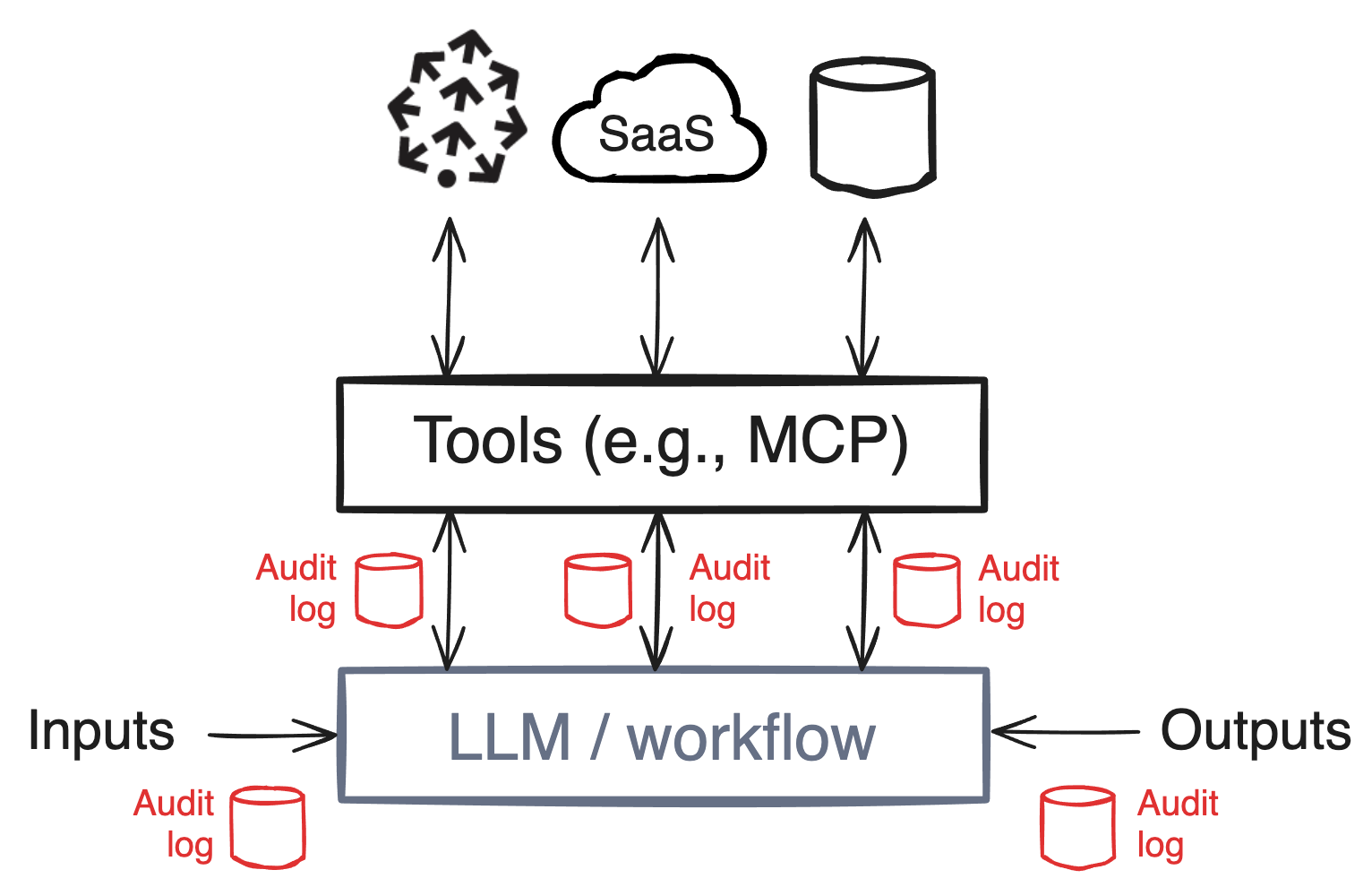

In the simplest terms, an agent is artificial intelligence that receives input (such as from a SaaS platform or database), interacts with tools (for example, through the Model Context Protocol, or MCP), and produces output. Here’s a simplified anatomy of an agent:

Does that diagram look familiar? At a high level, an agent functions similarly to how a streaming platform operates. That’s where the opportunity arises to address enterprise challenges around deploying agentic AI with—you guessed it—streaming platforms.

If you’d like to learn more about the basics of agentic AI, check out our introduction to autonomous agents.

In the not-so-distant future, AI will likely mediate every business interaction in some way. At this point it’s not a question of if, but rather how to get there without compromising your company’s safety and stability.

Many of the challenges around deploying and scaling agentic AI echo the streaming problems we thought we solved a decade ago. To help you better understand why those challenges exist, let’s take a brief detour and discuss a framework for thinking about the limitations of a plug-and-play AI agent.

If you’re familiar with Dungeons & Dragons, the game includes an alignment chart that helps frame the behavioral character traits. In essence, the chart pinpoints which characters are rule-followers (lawful) and which do not follow an established or consistent set of rules (chaotic)—layering those traits over whether a character is selfless or selfish (i.e., good or evil).

We can also apply the chart to business. When your company hires human workers, you do your due diligence to ensure those workers will operate within the top-left quadrant of the chart (as individuals who will do their best to follow the rules and act with good intentions).

Agentic AI, on the other hand, mostly falls into the right column of the chart. Despite the guardrails and training that companies attempt to put AI through, the best outcome at this point is that of “chaotic good”—because you don’t know what you don’t know. Without the ability to govern or audit an agent, you can’t confirm the agent is doing exactly what it’s supposed to do (and only what it’s supposed to do).

Meanwhile, you’re expected to plug agents into your private network with your sensitive company data and give them access to the internet as well. What could possibly go wrong?

If you want to deploy agentic AI safely and effectively, you need to prioritize several non-trivial moving pieces:

Most of these challenges are data streaming problems (aside from context querying and authentication).

So how can streaming solve these problems and give companies the peace of mind needed to safely deploy AI? Let’s walk through them one by one and highlight where and how streaming can help.

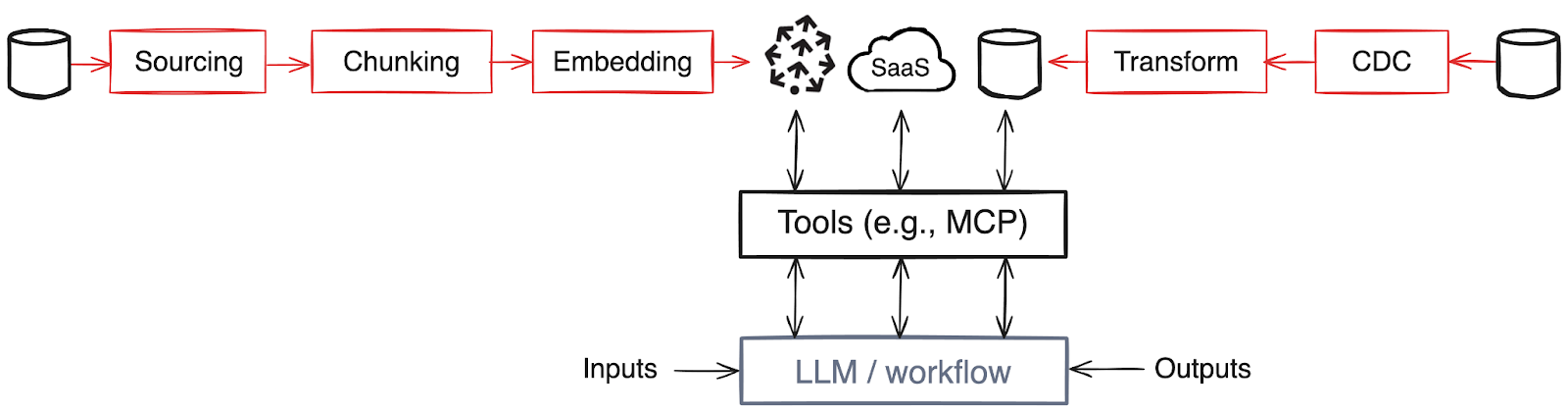

Building and maintaining data for your agent is a classic streaming Extract, Transform, Load (ETL) use case. You want to create datasets that are useful for your agents, whether you’re building a knowledge base that connects to a vector database like Pinecone or performing change data capture (CDC) and pulling that data into an Online Analytical Processing (OLAP) database for analytical queries.

The more you focus on keeping your data up to date, the more effective the agents will be. Streaming matters here because agents working from stale or incomplete context reason less effectively.
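To make the CDC idea concrete, here is a minimal sketch of applying a stream of change events to a keyed context store that an agent could read from. The `ChangeEvent` shape, the `apply_changes` helper, and the sample records are all hypothetical; a real pipeline would consume events from a streaming platform and write to a vector or OLAP database rather than a Python dict.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical CDC event: an upsert or delete for one source row."""
    op: str                      # "upsert" or "delete"
    key: str                     # primary key of the source row
    value: Optional[dict] = None


def apply_changes(store: dict, events: Iterable[ChangeEvent]) -> dict:
    """Apply change events in order so the store mirrors the source table."""
    for event in events:
        if event.op == "upsert":
            store[event.key] = event.value
        elif event.op == "delete":
            store.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op}")
    return store


# Illustrative events only; the keys and fields are made up.
events = [
    ChangeEvent("upsert", "customer-1", {"tier": "free"}),
    ChangeEvent("upsert", "customer-2", {"tier": "pro"}),
    ChangeEvent("upsert", "customer-1", {"tier": "enterprise"}),
    ChangeEvent("delete", "customer-2"),
]
context_store = apply_changes({}, events)
```

Because events are applied in order, the store always reflects the latest state of each row, which is the property that keeps agent context fresh.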

Governance can mean many things, and it doesn't have to be a streaming problem. But when you’re building agentic systems that span datasets and sources, you need the ability to configure and enforce consistent behaviors among those agents.

Enforcement at each data source is virtually impossible when your architecture includes datasets, vector and OLAP databases, SaaS tools, Kafka Streams, and beyond. To effectively govern a fleet of agents, focus on the interconnection points.

Streaming excels at data movement. It allows you to configure Agent X’s access to read and write for data types A and B, and those rules will apply any time Agent X encounters data types A and B (and Agent X won’t do anything when it encounters data type C).
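The Agent X example can be sketched as a small policy check applied at the point where data moves. This is an illustration under assumptions, not how any particular streaming platform implements access control: the `Policy` class, the `POLICIES` table, and the `is_allowed` function are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical per-agent policy: which data types it may read or write."""
    readable: frozenset
    writable: frozenset


# Agent X may read and write data types A and B, and nothing else.
POLICIES = {
    "agent-x": Policy(readable=frozenset({"A", "B"}),
                      writable=frozenset({"A", "B"})),
}


def is_allowed(agent_id: str, action: str, data_type: str,
               policies: dict = POLICIES) -> bool:
    """Deny by default: unknown agents, actions, and data types are rejected."""
    policy = policies.get(agent_id)
    if policy is None:
        return False
    if action == "read":
        return data_type in policy.readable
    if action == "write":
        return data_type in policy.writable
    return False
```

Placing the check at the interconnection point means the same rule applies no matter which source the data came from, instead of being re-implemented in every system.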

Enforcement in a single agentic data plane brings uniformity to the governance of technological sprawl. With streaming, you can enforce service-level objectives for latency, accuracy, and cost—and turn opaque agent behavior into governed workflows.

If you think back to the D&D alignment chart, you have these chaotic agents with internal logic that remains unknown. To understand what an agent is doing in response to a given input, you have to record all inputs and outputs.

Historically, we’ve chosen the more cost-effective option for auditing: logging request metadata rather than the full payload (i.e., User Y read Z number of bytes on such-and-such day). But with agents, you need to be able to audit what the request was and what the agent did in response. You can’t draw reliable conclusions about agent behavior without the full record.

This is again where streaming comes into the picture: streaming systems are good at high throughput, low latency, and durable logs.
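As a rough sketch of what full-payload auditing means in practice, the snippet below appends every request and response to an append-only log and returns an offset for each record. The `AuditLog` class is hypothetical and in-memory; in a real deployment the durable log would be a topic on a streaming platform.

```python
import json
import time


class AuditLog:
    """Hypothetical in-memory, append-only log of agent requests and responses."""

    def __init__(self) -> None:
        self._records = []

    def append(self, agent_id: str, request: dict, response: dict) -> int:
        """Store the full request and response, not just metadata about them."""
        offset = len(self._records)
        record = {
            "offset": offset,
            "ts": time.time(),
            "agent_id": agent_id,
            "request": request,
            "response": response,
        }
        # Serialize at write time so later mutation of the inputs cannot
        # change what was recorded.
        self._records.append(json.dumps(record, sort_keys=True))
        return offset

    def read(self, start: int = 0) -> list:
        """Return all records from the given offset onward."""
        return [json.loads(raw) for raw in self._records[start:]]


log = AuditLog()
first = log.append("agent-x", {"prompt": "summarize ticket 7"}, {"text": "summary"})
second = log.append("agent-x", {"prompt": "close ticket 7"}, {"text": "closed"})
```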

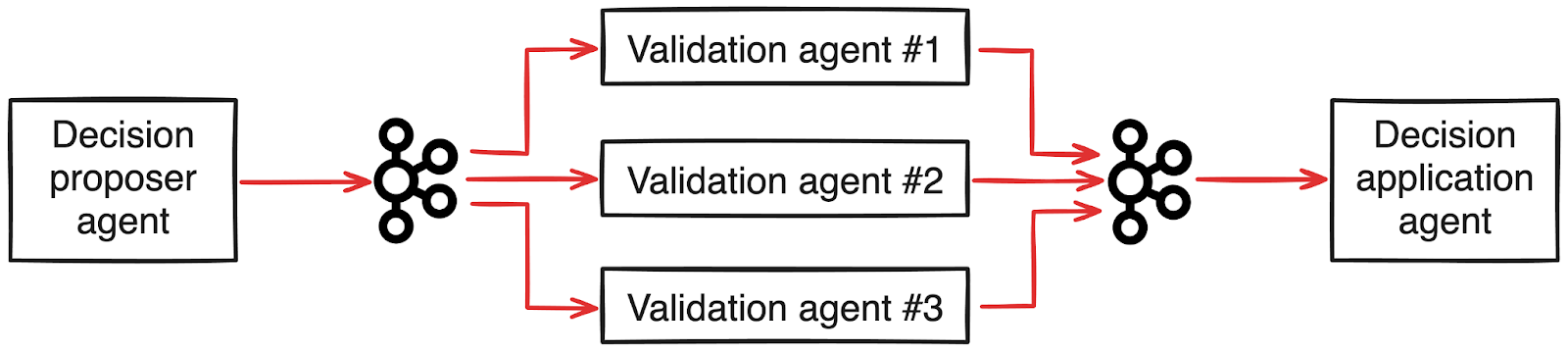

Validating agent behavior is a classic streaming replay scenario. Audit logs can perform double duty to help you review and confirm whether the agent in question is actually doing the job you asked it to. You can record the agent’s inputs and outputs, then reassess.
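A replay check can be sketched as feeding recorded inputs back through an agent and comparing the new outputs with the recorded ones. Everything here is illustrative: `replay`, `candidate_agent`, and the sample records are hypothetical, and the exact-match comparison is a simplification, since real LLM outputs are often nondeterministic and may need a looser scoring function.

```python
from typing import Callable


def replay(records: list, agent_fn: Callable[[str], str]) -> list:
    """Re-run recorded inputs and report where the output differs."""
    mismatches = []
    for record in records:
        actual = agent_fn(record["input"])
        if actual != record["output"]:
            mismatches.append({
                "offset": record["offset"],
                "expected": record["output"],
                "actual": actual,
            })
    return mismatches


# Made-up audit records standing in for a recorded stream.
recorded = [
    {"offset": 0, "input": "refund order 17", "output": "refund_issued"},
    {"offset": 1, "input": "cancel order 9", "output": "cancelled"},
]


def candidate_agent(text: str) -> str:
    """Deterministic stand-in for a new agent version under test."""
    return "refund_issued" if text.startswith("refund") else "escalated"


mismatches = replay(recorded, candidate_agent)
```

In this example the candidate agent agrees with the recording on the refund request and diverges on the cancellation, which is the kind of difference a replay is meant to surface before a new version goes live.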


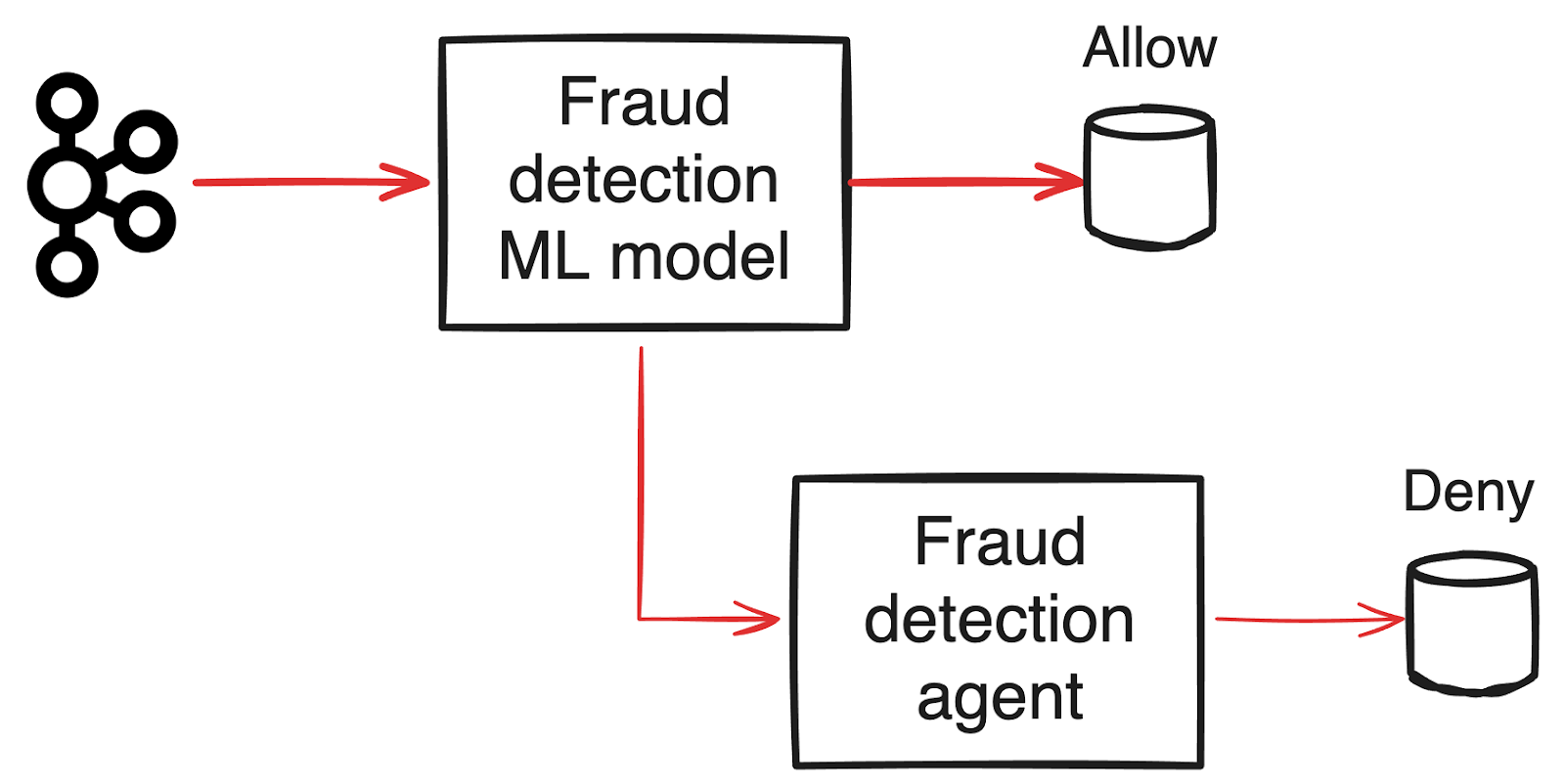

LLMs have their uses, but they’re not a fit for every problem—because they’re also expensive, require a lot of compute, and aren’t very fast. This is where dynamic routing becomes beneficial. You can use AI when it makes sense and continue to rely on your other systems when it doesn’t.

Take fraud detection, for example. Machine learning (ML) models and heuristics are cheaper and make more sense to scan most of your data (since fraud will likely only make up a small percentage). Once those systems identify an anomaly, a trained fraud detection agent can help you investigate further.

If you’re building on a streaming architecture, you get this ability to filter or route subsets of data to an agent.
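The fraud example can be sketched as a cheap heuristic that screens every record and routes only the anomalies to an agent. The `looks_anomalous` rule, its threshold, and the transaction fields are assumptions made for illustration; a real system would use trained models and route between topics rather than Python lists.

```python
def looks_anomalous(txn: dict, amount_threshold: float = 10_000.0) -> bool:
    """Hypothetical heuristic: large amounts or foreign activity look suspicious."""
    return txn["amount"] > amount_threshold or txn["country"] != txn["home_country"]


def route(transactions: list) -> dict:
    """Send anomalies to the agent path and everything else to the standard path."""
    routed = {"agent": [], "standard": []}
    for txn in transactions:
        destination = "agent" if looks_anomalous(txn) else "standard"
        routed[destination].append(txn)
    return routed


# Made-up transactions for illustration.
transactions = [
    {"id": 1, "amount": 25.0, "country": "US", "home_country": "US"},
    {"id": 2, "amount": 50_000.0, "country": "US", "home_country": "US"},
    {"id": 3, "amount": 12.0, "country": "FR", "home_country": "US"},
]
routed = route(transactions)
```

Only the routed subset incurs the cost and latency of an LLM call; the bulk of the traffic stays on the cheaper path.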

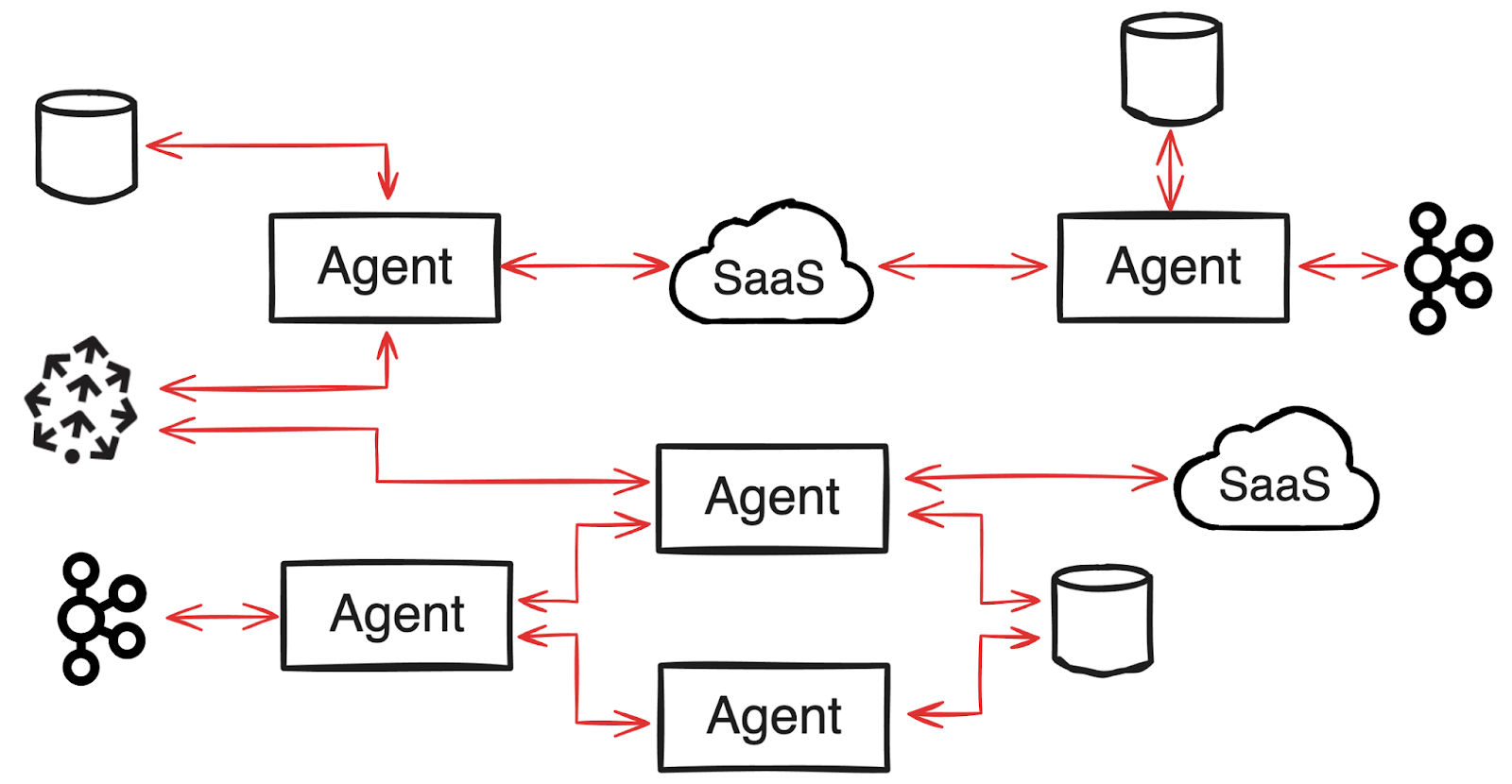

Multi-agent coordination is another classic streaming use case. Microservices architectures built on streaming get benefits like decoupled services, durability, and fan-in and fan-out of messages. Multi-agent scenarios require the same scalable, decoupled communication.

With streaming, you get easier maintenance and better durability for your multi-agent system.
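Decoupled, fan-out communication between agents can be sketched with a tiny in-memory publish/subscribe broker. The `Broker` class, the topic names, and the two stand-in agents are hypothetical; a streaming platform would provide the same pattern with durable, partitioned topics.

```python
from collections import defaultdict
from typing import Callable


class Broker:
    """Hypothetical in-memory broker: topics, subscribers, and a retained log."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)
        self.log = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: dict) -> None:
        # Retain the message first, then fan it out to every subscriber.
        self.log[topic].append(message)
        for handler in list(self._subscribers[topic]):
            handler(message)


broker = Broker()
findings = []
notifications = []


def investigator(alert: dict) -> None:
    """Stand-in agent: consumes alerts and publishes a finding."""
    broker.publish("findings", {"alert_id": alert["id"], "verdict": "needs_review"})


def notifier(alert: dict) -> None:
    """Second consumer of the same alert, unaware of the investigator."""
    notifications.append(alert["id"])


broker.subscribe("alerts", investigator)
broker.subscribe("alerts", notifier)
broker.subscribe("findings", findings.append)
broker.publish("alerts", {"id": 42})
```

Neither consumer knows about the other, and the publisher knows about neither, so agents can be added, replaced, or removed without changing the rest of the system.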

After several decades of evolution across distributed systems, we already have the building blocks to scale agentic AI safely—we just need to apply them. While you might be surprised to see streaming as a key piece of the agentic data plane, it starts to make a lot of sense once you break down the actual problems around infrastructure and data movement. Watch Tyler’s full talk from MDI Summit to dig in further.

Just keep in mind that while streaming can help solve a lot of agentic AI challenges, it’s not your answer for everything. You still need authN/authZ, a multi-modal catalog of contextual data (not just streaming data), querying, and durable execution for workflows, among other things.

Wondering how to start taming the AI chaos and set up your team to work 10x better? Redpanda recently launched the Agentic Data Plane: a managed, governed data control plane that provides the missing layer companies need to safely and reliably integrate agentic AI. If you’re curious, get in touch to learn more.
