Five principles for governed autonomy with enterprise AI


How a governed data control plane ensures trust and accountability

Deploying agentic AI requires trust-fall levels of confidence, which is why so many businesses are hesitant to start. You need to know that agents are doing what they’re supposed to be doing (and not doing what they shouldn’t be).

So how do you get to the point where you feel comfortable taking the leap? It's a bit of a chicken-and-egg problem: you can't build trust in agents without running them, but you also need guardrails before you do. A platform like Redpanda's Agentic Data Plane (ADP) can help you safely test and scale agentic systems while offering governance, auditing, and observability.

In a recent Tech Talk, Redpanda Solutions Engineer Garrett Raska walked through how to get started with agentic AI, how to choose your first use case, and why a data streaming company is uniquely suited to help.

You can watch the Tech Talk, or read on for a summary of his key takeaways.


First, data streaming and agentic systems have significant overlap in their requirements. Much like agentic systems, data streaming focuses on an input-output flow of data. Likewise, streaming is:

Second, data streaming and agentic systems face many of the same challenges, including:

For a deeper dive into common challenges across data streaming and agentic systems, check out our blog on how data streaming platforms can help you scale agentic AI.

If you're just getting started with agentic systems or still finding your footing, you probably find it difficult to trust agents without proper visibility or governance. That's completely understandable.

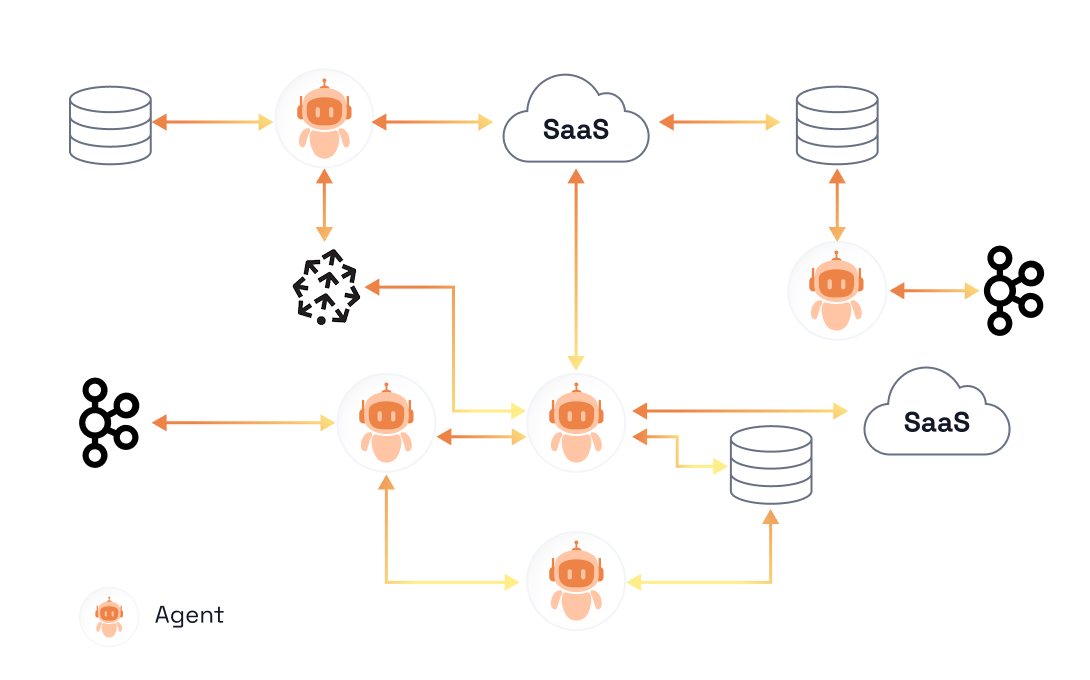

Agents need extensive context and data access to operate effectively. They require access to different SaaS platforms and databases, LLMs, Model Context Protocol (MCP) tools, and even other agents—basically the same level of data access a developer would need. Agents are also incredibly chatty, constantly making calls to these various tools to do their work.

To mitigate potential trust issues, you need a way to configure and enforce consistent behaviors. But it would be frustratingly complex to govern those agents at every access point.
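Governing agents in one place, instead of at every access point, can be pictured as a single default-deny gateway that every agent call passes through. This is a minimal, hypothetical Python sketch, not ADP's API: `ToolCall`, `PolicyGateway`, and the agent and target names are invented for illustration, and a real system would also need authentication and richer policy rules.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass(frozen=True)
class ToolCall:
    """One request from an agent to a tool, database, or another agent."""
    agent_id: str
    target: str   # hypothetical resource name, e.g. "crm" or "orders_db"
    action: str   # e.g. "read" or "write"


@dataclass
class PolicyGateway:
    """Single enforcement point that every agent call passes through.

    `allow` maps an agent ID to the (target, action) pairs it may use.
    Anything not listed is denied (default-deny).
    """
    allow: dict[str, set[tuple[str, str]]] = field(default_factory=dict)

    def is_allowed(self, call: ToolCall) -> bool:
        return (call.target, call.action) in self.allow.get(call.agent_id, set())

    def dispatch(self, call: ToolCall, handler: Callable[[ToolCall], Any]) -> Any:
        """Forward the call to `handler` only if the policy permits it."""
        if not self.is_allowed(call):
            raise PermissionError(f"{call.agent_id} may not {call.action} {call.target}")
        return handler(call)


gateway = PolicyGateway(allow={
    "support-agent": {("crm", "read"), ("tickets", "write")},
})

print(gateway.is_allowed(ToolCall("support-agent", "crm", "read")))   # True
print(gateway.is_allowed(ToolCall("support-agent", "crm", "write")))  # False
print(gateway.is_allowed(ToolCall("unknown-agent", "crm", "read")))   # False
```

Because the policy lives in one place, changing what an agent may do is a single edit to `allow` rather than a change at each database, SaaS platform, or MCP tool.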

Redpanda developed Agentic Data Plane as a data control plane to help you get started with agentic AI—one that will allow you to ramp up quickly once you graduate from testing to deploying agents in production environments.

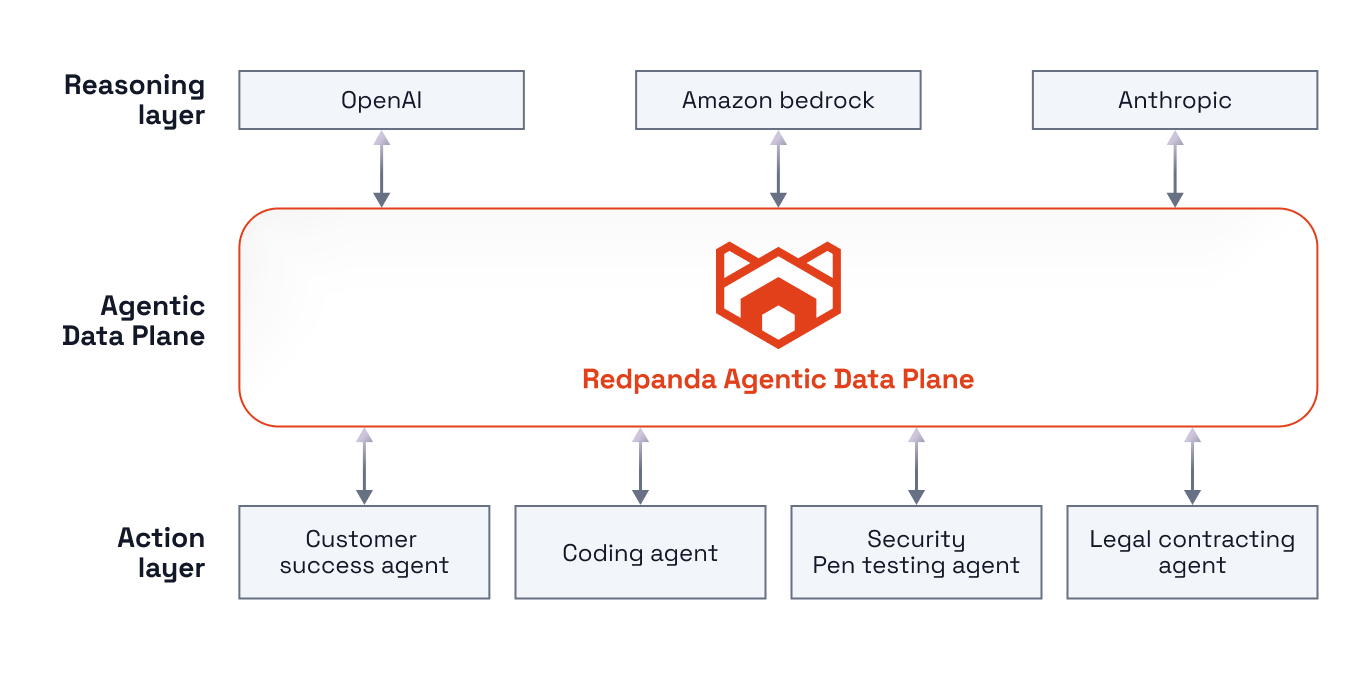

With ADP, you get the tools to build and operate large-scale agentic systems, along with the context and governance for peace of mind. There are three key layers for managing agentic systems at scale: the reasoning layer, the Agentic Data Plane, and the action layer.
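One way to picture the middle layer is as a recorder sitting between the reasoning layer and the action layer. The sketch below is a minimal, hypothetical Python illustration, not ADP's implementation or API: it assumes every interaction is appended as one JSON line tagged with a run ID, so a single agent run can later be parsed and replayed in order. `ExecutionLog`, the event kind names, and the field names are invented for the example.

```python
import json
import time
import uuid


class ExecutionLog:
    """Append-only execution log with one JSON object per line.

    Hypothetical illustration: the event kinds mirror the activity types
    this post lists (prompt, input, context retrieval, tool call, output, action).
    """

    KINDS = {"prompt", "input", "context_retrieval", "tool_call", "output", "action"}

    def __init__(self) -> None:
        # In-memory stand-in for a durable store such as a stream topic.
        self.lines: list[str] = []

    def record(self, run_id: str, agent_id: str, kind: str, payload: dict) -> None:
        """Validate the event kind, then append it as a JSON line."""
        if kind not in self.KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        event = {
            "run_id": run_id,
            "seq": len(self.lines),  # position in the log, i.e. arrival order
            "ts": time.time(),
            "agent_id": agent_id,
            "kind": kind,
            "payload": payload,
        }
        self.lines.append(json.dumps(event))

    def trace(self, run_id: str) -> list[dict]:
        """Parse the log and return one run's events in recorded order."""
        return [e for e in map(json.loads, self.lines) if e["run_id"] == run_id]


log = ExecutionLog()
run = str(uuid.uuid4())
log.record(run, "support-agent", "prompt", {"text": "Summarize ticket 123"})
log.record(run, "support-agent", "tool_call", {"tool": "tickets.get", "args": {"id": 123}})
log.record("another-run", "billing-agent", "prompt", {"text": "Check invoice 42"})
log.record(run, "support-agent", "output", {"text": "Customer reports a login error."})

for event in log.trace(run):
    print(event["seq"], event["kind"])
# 0 prompt
# 1 tool_call
# 3 output
```

Keying every event by run ID is what turns a chatty stream of calls into an audit trail: one filter recovers everything a given agent run saw and did, even when many runs write to the same log at once.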

In summary, with Redpanda ADP, you can oversee agents whenever they intersect with other tools, databases, and agents. ADP logs all agent activities—every prompt, input, context retrieval, tool call, output, and action—and gives you the ability to audit and trace those activities in a parsable execution log. That means you can easily:

Once you have a plan or tool to oversee your agentic system, it’s time to consider a few practical steps before moving into the testing or building phase.

Consider how you’ll deploy the agent: where and how are you going to run the service?

Whether you're using Kubernetes or an on-premises resource (or something else), you need to set up secure accounts within AWS (or your preferred cloud infrastructure provider) to deploy and run your agent. Even if you plan to test a use case with staging data, you'll still need to connect to your source and sink systems. Configuring this setup early will pay off once you start scaling.

You can also use a data streaming platform like Redpanda, which includes an orchestration layer that will provision all required services on your behalf.

In an enterprise setting, you’ll need to coordinate with several other teams to secure permissions for different data sources. This might involve speaking to your infrastructure team, database administrators, and even your security personnel to understand what’s required.

Start these conversations as soon as possible. You don’t want to be in a position where you’ve built an agent that's producing output but have no way to productionize the setup.

With agentic AI, many companies want to plug agents into the most ambiguous scenarios to solve the problems humans couldn’t. The challenge with this approach is that if you don’t already know the solution, you can’t really determine whether the agent is doing its job.

Instead, we recommend picking a well-understood problem, particularly when you're just getting started. Build an agent that replicates a process where you have defined steps, a discrete pattern, and the ability to test at different points in the process. Then you'll have clear data to measure agent performance and understand where and how an agentic system will fit in.
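Because the correct result of each step is already known, measuring the agent can be as simple as replaying historical cases and scoring each step separately. The sketch below assumes a hypothetical two-step ticket-triage process (`category` and `priority`); `Case`, `evaluate`, and `stub_agent` are illustrative names, and `stub_agent` is a rule-based stand-in for where a real agent call would go.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    """One historical example where the correct result of each step is known."""
    inputs: dict
    expected: dict[str, str]   # step name -> known-good result


def evaluate(agent: Callable[[dict], dict[str, str]], cases: list[Case]) -> dict[str, float]:
    """Return, per step, the fraction of cases where the agent matched the known result."""
    hits: dict[str, int] = {}
    totals: dict[str, int] = {}
    for case in cases:
        actual = agent(case.inputs)
        for step, want in case.expected.items():
            totals[step] = totals.get(step, 0) + 1
            if actual.get(step) == want:
                hits[step] = hits.get(step, 0) + 1
    return {step: hits.get(step, 0) / totals[step] for step in totals}


def stub_agent(inputs: dict) -> dict[str, str]:
    # Placeholder for a real agent call: a trivial keyword-based stand-in.
    text = inputs["text"].lower()
    category = "billing" if "invoice" in text else "technical"
    priority = "high" if "outage" in text else "normal"
    return {"category": category, "priority": priority}


cases = [
    Case({"text": "Invoice is wrong"}, {"category": "billing", "priority": "normal"}),
    Case({"text": "Full outage in EU region"}, {"category": "technical", "priority": "high"}),
    Case({"text": "Invoice portal outage"}, {"category": "technical", "priority": "high"}),
]

print(evaluate(stub_agent, cases))
# → {'category': 0.6666666666666666, 'priority': 1.0}
```

Scoring per step, rather than only the final result, shows where in the process the agent diverges from the known-good path, which is exactly the kind of clear data a well-understood problem makes possible.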

If you try to tackle an unknown problem space, you’ll spend considerable time developing the steps and testing process—time you could have spent collecting data and refining your agent (or building more agents).

One of the most common questions we hear from companies curious about agentic systems is, “I see the potential, but what are some specific use cases?”

Let's take a quick look at some of the common verticals with low-latency requirements where AI adoption is moving quickly:

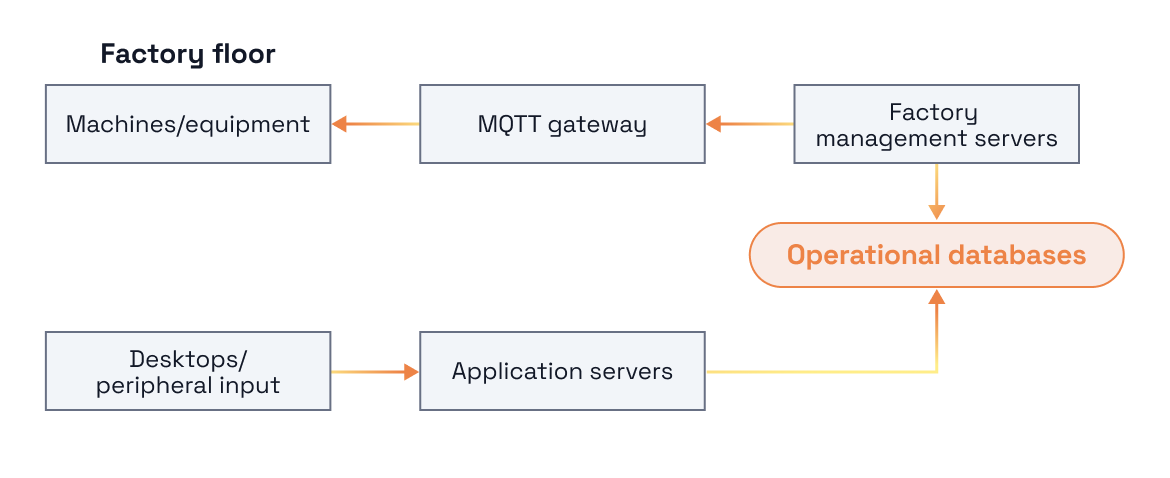

Take manufacturing, for example. There are two use cases where you can quickly deploy and start to work with agents:

To get the full scoop on safely deploying agentic systems, watch the Tech Talk.

If you’re itching to get started with ADP, chat with us to learn how we can help you safely deploy, govern, and scale agentic systems across your data landscape. Get in touch!
