
Benchmark shows Vera provides up to 5.5x lower latency and up to 73% higher throughput than other leading CPUs

"Redpanda recently tested NVIDIA Vera running Kafka-compatible workloads and saw dramatically better performance than other systems we’ve benchmarked, delivering up to 5.5x lower latency. Vera represents a new direction in CPU architecture, with more memory and less overhead per core, enabling our customers to scale real-time streaming workloads further than ever and unlock new AI and agentic applications." — Alex Gallego, Redpanda CEO and Founder.

From cybersecurity and financial services to social media and entertainment, nearly every major industry is racing to harness the power of agentic AI. That means data-intensive applications must be deployed as close to inference engines as possible.

Redpanda has a proven track record of delivering mission-critical infrastructure for applications that drive enterprise growth. When Redpanda is deployed on NVIDIA Vera, demanding enterprise customers get rock-solid infrastructure software on world-class silicon, and we ran a benchmark to prove it.

NVIDIA Vera is the new high-performance CPU based on the NVIDIA-designed Olympus core, optimized to support the CPU-intensive demands of reinforcement learning, agentic AI, and data processing at data center scale. Vera is a key component of the NVIDIA Vera Rubin platform and is also available as a standalone CPU for hyperscale cloud, analytics, HPC, storage, and enterprise workloads.

In our benchmark, we compared Vera against five other systems and found that Vera delivered the lowest streaming latencies across the board, the best interconnect scaling (with up to 73% higher throughput than AMD EPYC “Turin”), and the fastest build times.

Read on for the benchmark breakdown.

First, some context:

Redpanda Streaming is known for being a blazing-fast, scalable data streaming system compatible with Apache Kafka®. Built on a shard-per-core, shared-nothing architecture, Redpanda Streaming is an extremely efficient engine designed to take full advantage of CPU-based architectures, delivering the highest throughputs with the lowest latencies.
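To make the shard-per-core, shared-nothing idea concrete, here is a minimal Python sketch under a simplified model: each shard exclusively owns a set of partitions, and other threads can only hand it messages through its inbox, never touch its data directly. This is not Redpanda's implementation; the `Shard`, `shard_for`, `produce`, and `shutdown` names are hypothetical, and Python threads are neither pinned to cores nor truly parallel, so the sketch illustrates only the ownership and routing pattern.

```python
import queue
import threading
import zlib


class Shard:
    """A worker that exclusively owns one slice of the data (hypothetical sketch).

    Other threads never touch `self.log`; they can only send messages to `inbox`.
    """

    def __init__(self, shard_id):
        self.shard_id = shard_id
        self.inbox = queue.Queue()  # the only cross-shard communication channel
        self.log = {}  # partition -> records; written only by this shard's thread
        self.thread = threading.Thread(target=self._run, daemon=True)
        self.thread.start()

    def _run(self):
        while True:
            msg = self.inbox.get()
            if msg is None:  # shutdown sentinel
                return
            partition, record = msg
            self.log.setdefault(partition, []).append(record)


def shard_for(partition, num_shards):
    # A stable hash keeps each partition on the same shard across calls.
    return zlib.crc32(partition.encode()) % num_shards


def produce(shards, partition, record):
    # Route the record to its owning shard instead of taking a shared lock.
    shards[shard_for(partition, len(shards))].inbox.put((partition, record))


def shutdown(shards):
    for s in shards:
        s.inbox.put(None)
    for s in shards:
        s.thread.join()


if __name__ == "__main__":
    shards = [Shard(i) for i in range(4)]
    for i in range(100):
        produce(shards, f"orders-{i % 8}", i)
    shutdown(shards)
    for s in shards:
        print(s.shard_id, {p: len(r) for p, r in sorted(s.log.items())})
```

Because every partition has exactly one owner, no locks guard the per-partition data; the cost of coordination moves into message passing between shards.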

Now with Redpanda SQL (formerly Oxla), we also have a fast, scalable distributed SQL engine that efficiently harnesses CPU cycles for maximum performance.

For the benchmark, we ran three tests in which we provisioned Redpanda on servers with different CPU types: NVIDIA Vera, AMD EPYC “Turin,” AMD EPYC “Genoa,” and Intel Xeon 6 “Granite Rapids.”

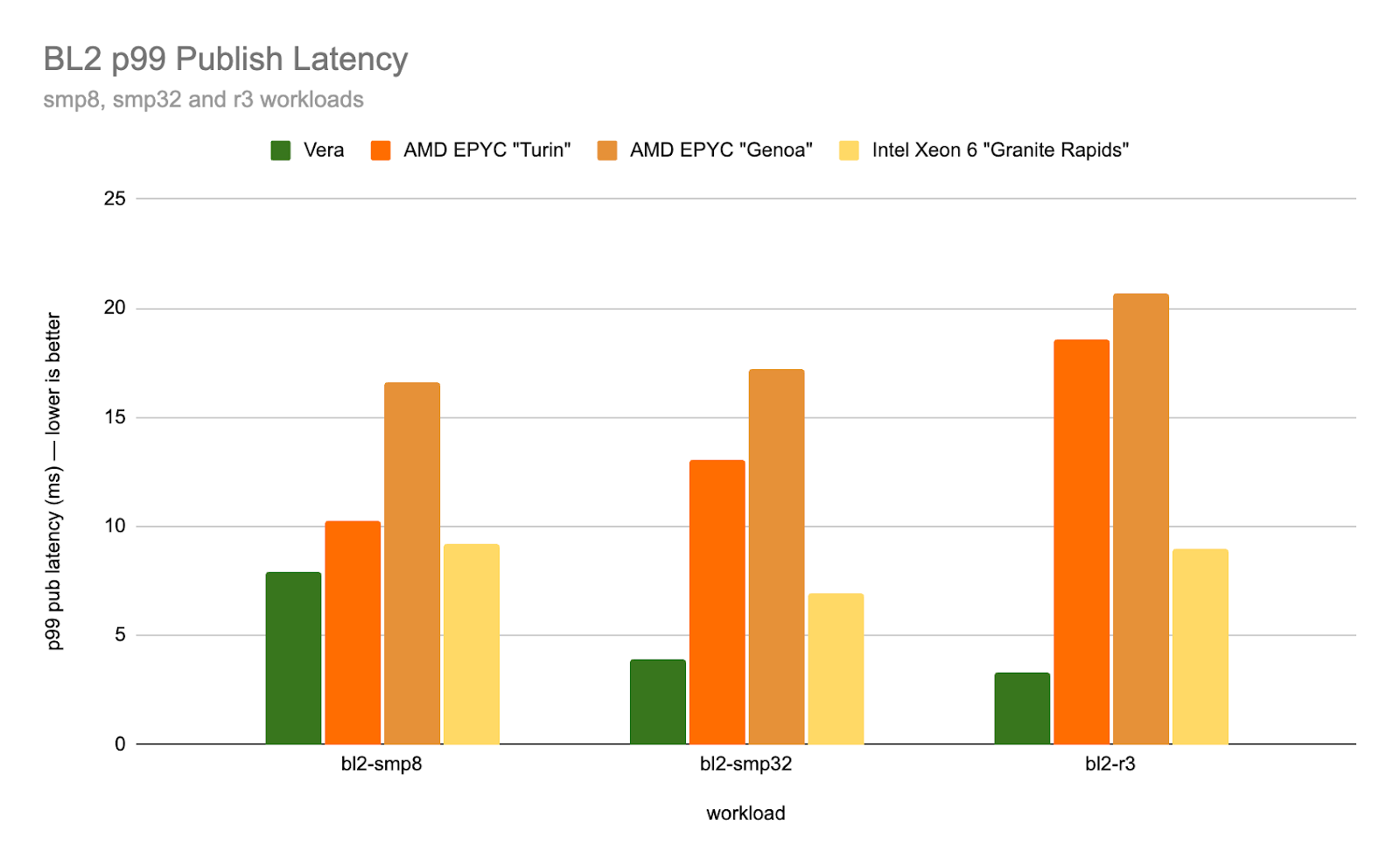

For each of the CPU types in this first test, we provisioned Redpanda in several configurations, scaling both the number of cores and the number of machines.

It’s worth highlighting that while latency on other architectures mostly increases, or decreases only modestly, as cores and machines scale, Vera’s latency drops significantly with scale.

This test specifically examines long-tail latency, measured as P99 latency: the longest response time among the fastest 99% of operations. This number is especially important because it exposes performance bottlenecks such as lags and spikes. A low P99 means that even under high load, 99% of operations complete within that time, so the vast majority of requests meet Service Level Agreements (SLAs).
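The definition above corresponds to the nearest-rank percentile. The following minimal Python sketch computes P99 from raw latency samples; the function name and the sample values are hypothetical, and benchmark tools may use other percentile definitions (for example, interpolating between neighboring samples).

```python
def nearest_rank_percentile(samples, pct):
    """Return the nearest-rank percentile of `samples` for an integer pct in 1..100.

    For pct=99 this is the slowest latency among the fastest 99% of operations.
    """
    if not samples:
        raise ValueError("need at least one sample")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(samples)
    # 1-based rank = ceil(pct * n / 100), computed in integers to avoid float rounding.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical latencies in ms: 990 fast operations and 10 slow outliers.
    print(nearest_rank_percentile([2.0] * 990 + [50.0] * 10, 99))  # 2.0
    # With 11 outliers, the 990th-fastest operation is itself an outlier.
    print(nearest_rank_percentile([2.0] * 989 + [50.0] * 11, 99))  # 50.0
```

The second call shows why P99 is sensitive to spikes: a single extra slow operation is enough to move it from 2 ms to 50 ms, even though the average barely changes.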

Redpanda found that, in the cluster configuration, NVIDIA Vera handled real-time streaming workloads with up to 5.5 times lower latency than AMD “Turin” and more than 2.5 times lower latency than Intel Xeon 6 “Granite Rapids.”

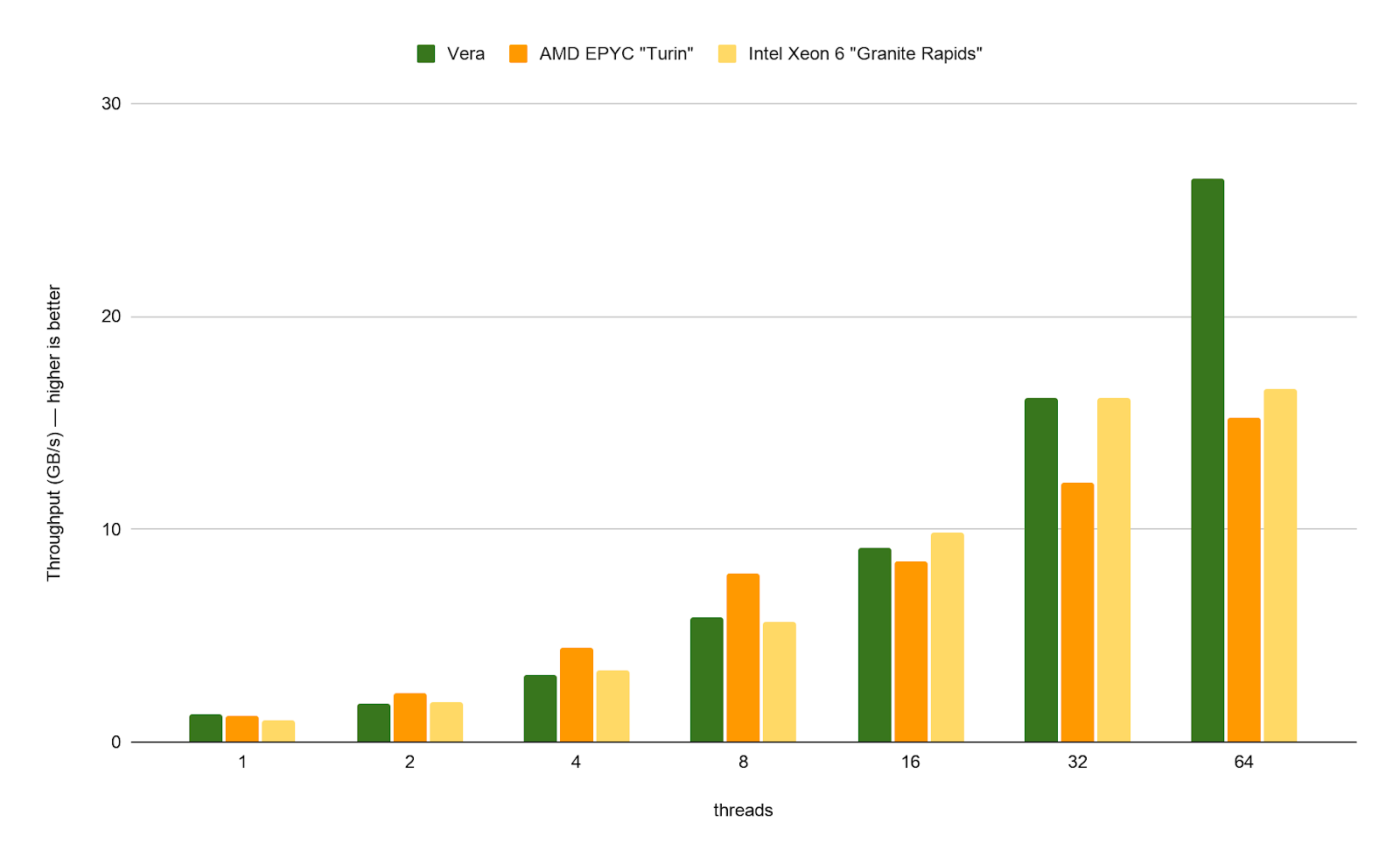

In the full benchmark, we tested three different communication strategies: batch, channel, and ring shuffle. The last of these, ring shuffle, is a novel strategy Redpanda SQL uses to move data between cores in a cache-efficient way while reducing the number of atomic operations.

This ring shuffle benchmark measures how efficiently producers can send rows to consumers, with each producer and consumer pinned to a separate core. We highlighted the results for the “Ring K=2” variant, which uses two lock-free ring buffers. Vera performed well across the parameter space, and also with the “batch” and “channel” approaches.
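Lock-free ring buffers are a common way to pass data between exactly two cores. The Python sketch below shows a generic single-producer, single-consumer (SPSC) ring; it assumes nothing about how Redpanda SQL's ring shuffle is actually built, the class and method names are hypothetical, and it does not model what the K parameter controls beyond the fact that K=2 uses two ring buffers.

```python
class SpscRing:
    """Bounded single-producer, single-consumer ring buffer (hypothetical sketch).

    Only the producer advances `tail` and only the consumer advances `head`, so
    neither side needs a lock. A native implementation would keep these indices
    in atomics with acquire/release ordering; Python has no such primitives, so
    this sketch shows the index logic only.
    """

    def __init__(self, capacity):
        if capacity < 1 or capacity & (capacity - 1):
            raise ValueError("capacity must be a power of two")
        self.slots = [None] * capacity
        self.mask = capacity - 1  # cheap modulo for power-of-two sizes
        self.head = 0  # total items consumed; written only by the consumer
        self.tail = 0  # total items produced; written only by the producer

    def try_push(self, item):
        if self.tail - self.head == len(self.slots):
            return False  # full: the producer must retry or move on
        self.slots[self.tail & self.mask] = item
        self.tail += 1  # publish only after the slot is written
        return True

    def try_pop(self):
        if self.head == self.tail:
            return False, None  # empty
        idx = self.head & self.mask
        item = self.slots[idx]
        self.slots[idx] = None  # drop the reference so the slot can be reused
        self.head += 1  # free the slot only after reading it
        return True, item


if __name__ == "__main__":
    ring = SpscRing(4)
    print([ring.try_push(row) for row in ("r0", "r1", "r2", "r3", "r4")])
    # [True, True, True, True, False]
    print(ring.try_pop(), ring.try_push("r4"))  # (True, 'r0') True
```

Each side writes only its own index, which is what lets a native version avoid locks and keep the number of atomic updates to one per push or pop (or fewer, if rows are pushed in batches).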

The graph above shows cross-core shuffle throughput for the SQL engine on various configurations of up to 64 cores. Shuffle is a key database primitive used for operations like joins and aggregations. The shuffle implementation is specifically designed to scale linearly with the number of cores, assuming sufficient cross-core memory bandwidth. Again, Vera shines, continuing to scale all the way to 64 cores, whereas other architectures flatten out after 32 cores due to memory bandwidth saturation. In these tests, Vera delivered up to 73% higher throughput than AMD “Turin.”
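To see why shuffle matters for joins and aggregations, here is a minimal Python sketch of a hash shuffle followed by a per-consumer aggregation. It assumes hash partitioning on the grouping key; the function names are hypothetical, and the sketch is single-threaded, so it shows the data-movement pattern rather than the cross-core performance measured in the benchmark.

```python
import zlib
from collections import defaultdict


def hash_shuffle(rows, key, num_consumers):
    """Route every row to the consumer that owns its key (hypothetical sketch).

    All rows sharing a key land on the same consumer, so each consumer can
    aggregate or join its share without coordinating with the others.
    """
    outboxes = [[] for _ in range(num_consumers)]
    for row in rows:
        dest = zlib.crc32(str(row[key]).encode()) % num_consumers
        outboxes[dest].append(row)
    return outboxes


def local_sum(rows, key, value):
    """GROUP BY key, SUM(value) over one consumer's rows."""
    totals = defaultdict(int)
    for row in rows:
        totals[row[key]] += row[value]
    return dict(totals)


if __name__ == "__main__":
    rows = [{"nation": n, "revenue": r} for n, r in
            [("PERU", 10), ("CHINA", 5), ("PERU", 7), ("KENYA", 3), ("CHINA", 1)]]
    partials = [local_sum(box, "nation", "revenue")
                for box in hash_shuffle(rows, "nation", num_consumers=3)]
    # Key sets are disjoint, so the final result is a plain union of the partials.
    merged = {k: v for part in partials for k, v in part.items()}
    print(dict(sorted(merged.items())))  # {'CHINA': 6, 'KENYA': 3, 'PERU': 17}
```

Every row crosses to its owning consumer exactly once, which is why shuffle throughput tracks how much data the cores can exchange, that is, cross-core memory bandwidth.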

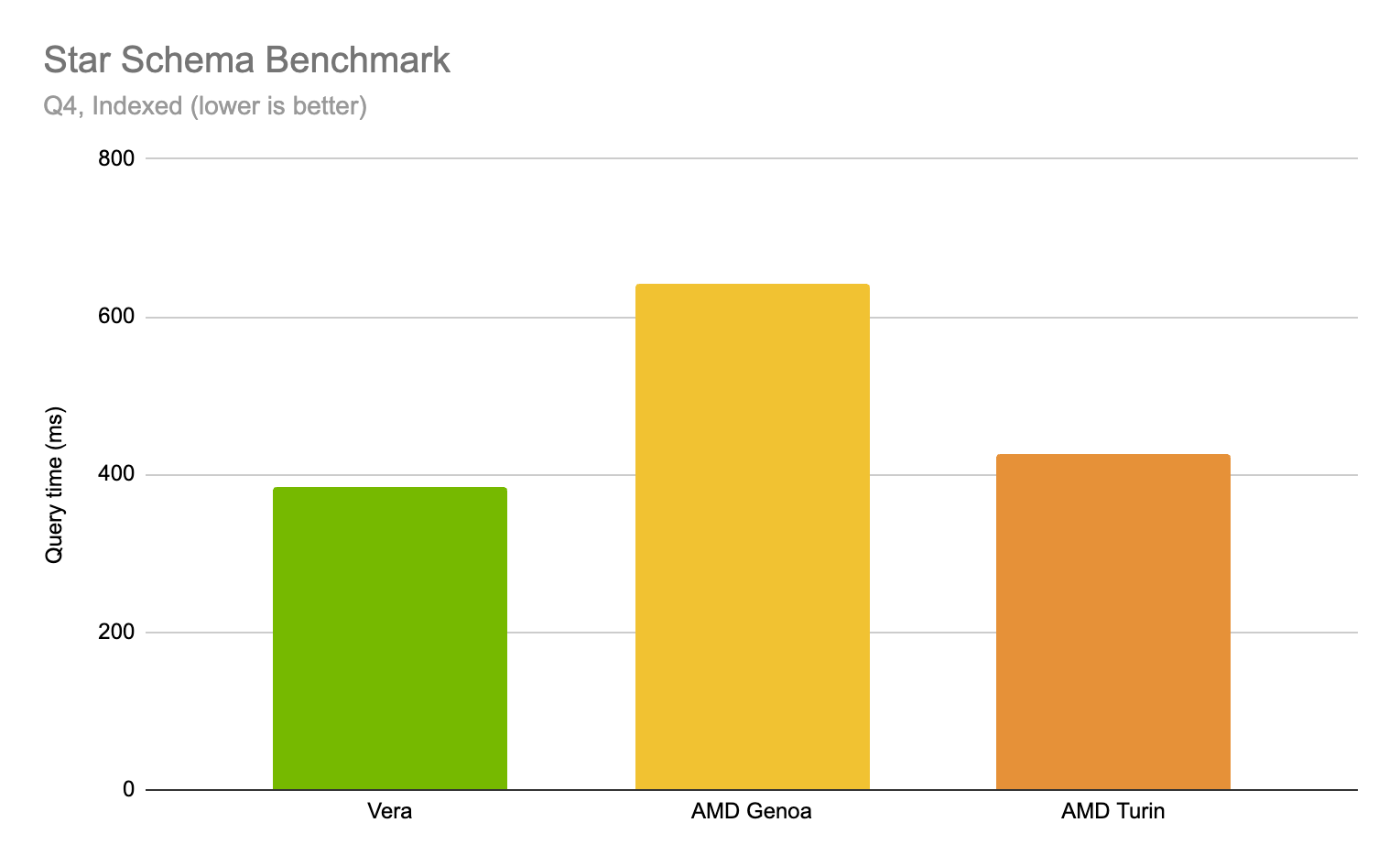

Multi-table joins are a core operation in analytical workloads. They also feature prominently in AI evaluation pipelines, where you join model outputs against ground-truth tables, metadata, and scoring dimensions. The Star Schema Benchmark (SSB) Q4 suite is its most join-heavy subset, joining the fact table against all four dimension tables in the schema.

The plot above shows the result of running SSB Q4 (indexed) on Redpanda SQL, our distributed SQL engine, across our test systems: Vera completes the Q4 joins 1.1x faster than AMD EPYC “Turin” and 1.7x faster than the previous-generation AMD EPYC “Genoa” instance.
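A star-schema query like SSB Q4 can be pictured as a hash join: build a hash table on each dimension table, then probe it with every row of the `lineorder` fact table. The Python sketch below shows that pattern on a few made-up rows. The column names follow the SSB schema, the filters are modeled on SSB Q4.1 as an assumption, the function names are hypothetical, and nothing here reflects how Redpanda SQL actually plans or executes the query.

```python
from collections import defaultdict


def star_join(fact, dims):
    """Inner-join fact rows to several dimension tables with hash lookups.

    `dims` maps a foreign-key column of `fact` to (dimension_rows, primary_key).
    Hypothetical sketch: real engines choose join order and algorithms per query.
    """
    # Build phase: one hash table per dimension, keyed by its primary key.
    tables = {fk: {row[pk]: row for row in rows} for fk, (rows, pk) in dims.items()}
    joined = []
    # Probe phase: look up each foreign key; drop the row if any lookup misses.
    for f in fact:
        out = dict(f)
        for fk, table in tables.items():
            match = table.get(f[fk])
            if match is None:
                break
            out.update(match)
        else:
            joined.append(out)
    return joined


def q4_style_profit(joined):
    """Profit by (year, customer nation) with Q4.1-style filters (assumed)."""
    profit = defaultdict(int)
    for r in joined:
        if (r["c_region"] == "AMERICA" and r["s_region"] == "AMERICA"
                and r["p_mfgr"] in ("MFGR#1", "MFGR#2")):
            profit[(r["d_year"], r["c_nation"])] += r["lo_revenue"] - r["lo_supplycost"]
    return dict(profit)


if __name__ == "__main__":
    customer = [{"c_custkey": 1, "c_nation": "PERU", "c_region": "AMERICA"},
                {"c_custkey": 2, "c_nation": "FRANCE", "c_region": "EUROPE"}]
    supplier = [{"s_suppkey": 10, "s_region": "AMERICA"}]
    part = [{"p_partkey": 100, "p_mfgr": "MFGR#1"}]
    date = [{"d_datekey": 19970101, "d_year": 1997}]
    lineorder = [
        {"lo_custkey": 1, "lo_suppkey": 10, "lo_partkey": 100,
         "lo_orderdate": 19970101, "lo_revenue": 50, "lo_supplycost": 20},
        {"lo_custkey": 2, "lo_suppkey": 10, "lo_partkey": 100,
         "lo_orderdate": 19970101, "lo_revenue": 70, "lo_supplycost": 10},
    ]
    joined = star_join(lineorder, {
        "lo_custkey": (customer, "c_custkey"),
        "lo_suppkey": (supplier, "s_suppkey"),
        "lo_partkey": (part, "p_partkey"),
        "lo_orderdate": (date, "d_datekey"),
    })
    print(q4_style_profit(joined))  # {(1997, 'PERU'): 30}
```

Every fact row probes four hash tables, so the probe phase is dominated by random memory lookups; that is one reason join-heavy queries like Q4 are sensitive to memory bandwidth and latency.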

Redpanda has a history of delivering mission-critical infrastructure components for both the user-facing and back-end applications that fuel our customers’ top-line growth. When Redpanda is deployed on NVIDIA Vera, demanding enterprise customers get the best of both worlds: rock-solid infrastructure software running on world-class silicon.

NVIDIA Vera is a natural hardware complement to the Redpanda Agentic Data Plane (ADP), Redpanda’s latest offering, which provides the connectivity, context, and governance for your enterprise agents. ADP is built on top of Redpanda Streaming and the other core components of the Redpanda Data platform.

For more information on deploying Redpanda in your organization and how Redpanda and NVIDIA can together fuel your next phase of growth, get in touch!
