How streaming data takes your AI from reactive responses to proactive problem-solving

Not every workload needs real-time AI. Batch processing is sufficient for historical analysis or reporting where immediate action isn't required. Real-time AI is essential only when the value of an insight decays within seconds or minutes.

How fast "real time" needs to be depends on the use case, ranging from single-digit milliseconds for trading to hundreds of milliseconds for chatbots. The practical definition is simply whether the system can act before the opportunity passes.

There is no single "best" model for real-time AI; success depends on the end-to-end system architecture. You need a model that fits your latency budget paired with a streaming data layer that ensures continuous, fresh context.

Real-time analytics visualizes what is happening right now, whereas real-time AI automates the decision of what to do about it. In a nutshell: analytics informs humans, AI takes action.

AI is everywhere right now. And behind the hype, there are certain realms of AI that are genuinely worth exploring. Real-time AI is one of them.

Real-time AI (or "proactive intelligence") is how autonomous vehicles make split-second navigation and safety decisions, and how fraudulent transactions get flagged in milliseconds instead of reported a week later. It’s also how emergency rooms can instantly prioritize critical cases as data comes in.

So if you’re building streaming data applications, you’ll want to learn about real-time AI before everyone else gets too far ahead.

The topic is so important that we even published an O’Reilly report on streaming data for real-time AI that you can download and read at your leisure. It's absolutely packed with practical guidance you can use as you design and ship real-time AI systems.

To help you decide whether it's for you, this post gives you a preview of what's inside. (Even if you don't want the report, you'll still learn a whole lot about real-time AI.)

Let’s get into it.

Real-time AI is an advanced class of systems that perceive, interpret, and act on data as events unfold, rather than after a delay. Unlike traditional AI, which relies on batch processing to analyze data hours or days later, real-time AI closes the gap between insight and action—often down to milliseconds.

In the O'Reilly report, there's one fundamental truth:

The gap between insight and action is shrinking to milliseconds, and organizations that can't keep up are losing ground to those that can.

In brief, traditional AI relies on batch processing: collect data, store it, and analyze it hours or days later. But by the time batch-processed insights arrive, the ideal moment to take action has gone. Markets have moved on, threats have evolved, and golden opportunities have disappeared.

Real-time AI changes the equation by enabling:

Think of real-time AI as a super smart intern or a wildly productive employee (who doesn’t argue with the project lead).

Real-time AI sounds fancy, but it's really just a practical reaction to a practical problem: a lot of data gets old fast.

If your model is working off yesterday's events, you'll still get an answer. It just won't be the right answer at the right moment. When data is stale, the consequences are immediate:

Real-time AI closes that gap by using fresh events as they happen, so the system can respond while the moment to act still exists.

The report covers three core messaging patterns that enable real-time systems: point-to-point (queue), publish-subscribe (pub/sub), and data streaming.
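To make the difference between the first two patterns concrete, here is a minimal in-memory sketch. The class names `PointToPointQueue` and `PubSubTopic` are hypothetical and exist only for illustration; they are not taken from the report or from any messaging library.

```python
from collections import deque


class PointToPointQueue:
    """Each message is delivered to exactly one consumer, then removed."""

    def __init__(self):
        self._messages = deque()

    def send(self, message):
        self._messages.append(message)

    def receive(self):
        # Returns None when the queue is empty.
        return self._messages.popleft() if self._messages else None


class PubSubTopic:
    """Each message is pushed to every subscriber registered at publish time."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def publish(self, message):
        for callback in self._subscribers:
            callback(message)


queue = PointToPointQueue()
queue.send("order-1")
first_worker = queue.receive()   # receives "order-1"
second_worker = queue.receive()  # receives None: the message is already consumed

topic = PubSubTopic()
inbox_a, inbox_b = [], []
topic.subscribe(inbox_a.append)
topic.subscribe(inbox_b.append)
topic.publish("price-update")    # both inboxes receive a copy
```

In both sketches a message is gone once it has been delivered, which is the main thing a streaming log does differently.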

Real-time processing can enhance all those patterns, but for real-time AI, you want to use data streaming. Data streaming is designed specifically for high-volume, continuous data processing. It offers three critical advantages for AI models:

Essentially, event streaming provides persistent, replayable logs of everything that has happened so AI models can learn from historical patterns while responding to current events.
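The idea of a persistent, replayable log can be sketched in a few lines. This is a simplified in-memory illustration, assuming hypothetical `EventLog` and `Consumer` classes; real streaming platforms add partitioning, durability, and retention policies that are left out here.

```python
class EventLog:
    """Append-only log: records are retained after being read."""

    def __init__(self):
        self._records = []

    def append(self, event):
        self._records.append(event)
        return len(self._records) - 1  # offset of the new record

    def read_from(self, offset):
        return self._records[offset:]


class Consumer:
    """Tracks its own position so it can resume or replay independently."""

    def __init__(self, log, offset=0):
        self.log = log
        self.offset = offset

    def poll(self):
        events = self.log.read_from(self.offset)
        self.offset += len(events)
        return events

    def seek(self, offset):
        self.offset = offset


log = EventLog()
for event in ["login", "add_to_cart", "checkout"]:
    log.append(event)

live = Consumer(log)
first_poll = live.poll()   # all three events so far
log.append("refund")
second_poll = live.poll()  # only the new event

live.seek(0)
replayed = live.poll()     # full history again, for example to retrain a model
```

Because reading never deletes anything, the same log can serve a live consumer and a later replay.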

Think of a live customer service chat where the AI responds in real time using internal company content and the customer's current inquiry, or gives the human agent immediate, context-aware assistance based on prior successful conversations for similar inquiries. Human agents can also replay the entire conversation later for training purposes, since it's all conveniently logged.

A few popular technologies that enable data streaming for real-time AI include Redpanda, Apache Kafka®, and Apache Pulsar, alongside cloud-native options like Amazon Kinesis and Azure Event Hubs.

So, how do you put together a scalable architecture for real-time AI? Well, to cite the report,

"Building a scalable, real-time AI system is not a single architectural choice; rather, it comprises layered optimizations that compound. Each one of these categories is a lever, and there are trade-offs between latency, persistence, computational complexity, and scalability that are deeply interconnected."

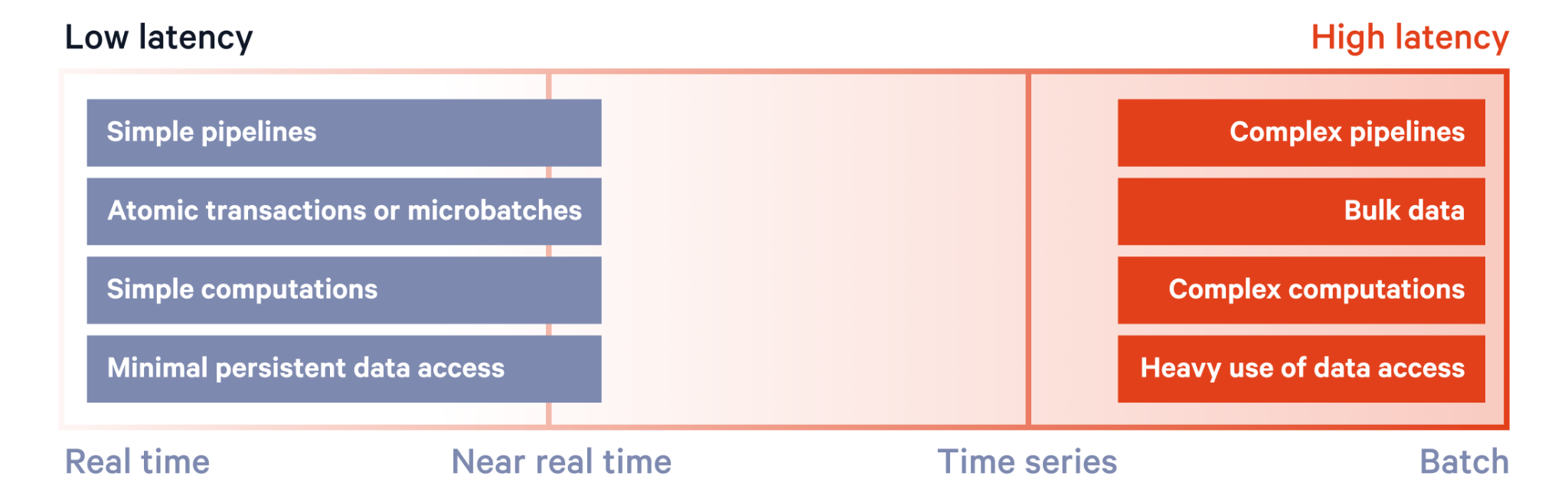

For example, latency (the time between data generation and consumption) depends on factors like pipeline complexity, data volume, and computational load.

Simply put, the more complex the pipeline, the higher the latency. (This is one reason AI-first companies, like poolside and Deepomatic, are choosing Redpanda to simplify their pipelines and keep latency ultra low.)
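One rough way to reason about this is a latency budget: sequential stages add up, so every extra hop spends part of the budget. The stage names and millisecond values below are hypothetical, chosen purely for illustration rather than measured from any real system.

```python
# Hypothetical per-stage latencies in milliseconds (illustrative, not measured).
STAGES_MS = {
    "ingest": 5,
    "feature_lookup": 15,
    "model_inference": 40,
    "action_dispatch": 10,
}


def end_to_end_latency_ms(stages):
    """Sequential stages add up: total latency is the sum of every step."""
    return sum(stages.values())


def within_budget(stages, budget_ms):
    return end_to_end_latency_ms(stages) <= budget_ms


total = end_to_end_latency_ms(STAGES_MS)             # 5 + 15 + 40 + 10 = 70
ok_before = within_budget(STAGES_MS, budget_ms=100)  # 70 <= 100

# Adding one more hop (say, an extra enrichment service) eats into the budget.
with_extra_hop = {**STAGES_MS, "enrichment_service": 35}
ok_after = within_budget(with_extra_hop, budget_ms=100)  # 105 > 100
```

This simple sum assumes the stages run strictly one after another; stages that run in parallel would need a different model.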

To really dig into the trade-offs in each layer and learn which architectural choices suit your use case, go ahead and download the full report.


Real-time AI looks simple on a slide: events go in, model thinks, action comes out. In reality, it's a system with a tight latency budget and a lot of moving parts.

For one, you have to keep the pipeline predictable under load. It's not enough for average latency to look good; you need the "slow path" to behave, too. You must be honest about the computational cost of every step between the data and the decision.
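A small sketch shows why the average can hide the slow path. The latency samples are hypothetical, and the `percentile` helper is an illustrative nearest-rank implementation, not a function from any monitoring library.

```python
import statistics

# Hypothetical latency samples in ms: 98 fast requests and 2 slow ones.
samples_ms = [20] * 98 + [900, 1200]


def percentile(values, pct):
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    ordered = sorted(values)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


mean_ms = statistics.mean(samples_ms)  # 40.6
p50_ms = percentile(samples_ms, 50)    # 20
p99_ms = percentile(samples_ms, 99)    # 900
```

Here the mean and median both look healthy, while the 99th percentile shows that some requests wait close to a second, far too long for a decision that has to land in milliseconds.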

Beyond latency, you face the operational realities of distributed systems:

Aside from architectural choices and their implementations, the report also covers a rarely-discussed side of AI: where to run it.

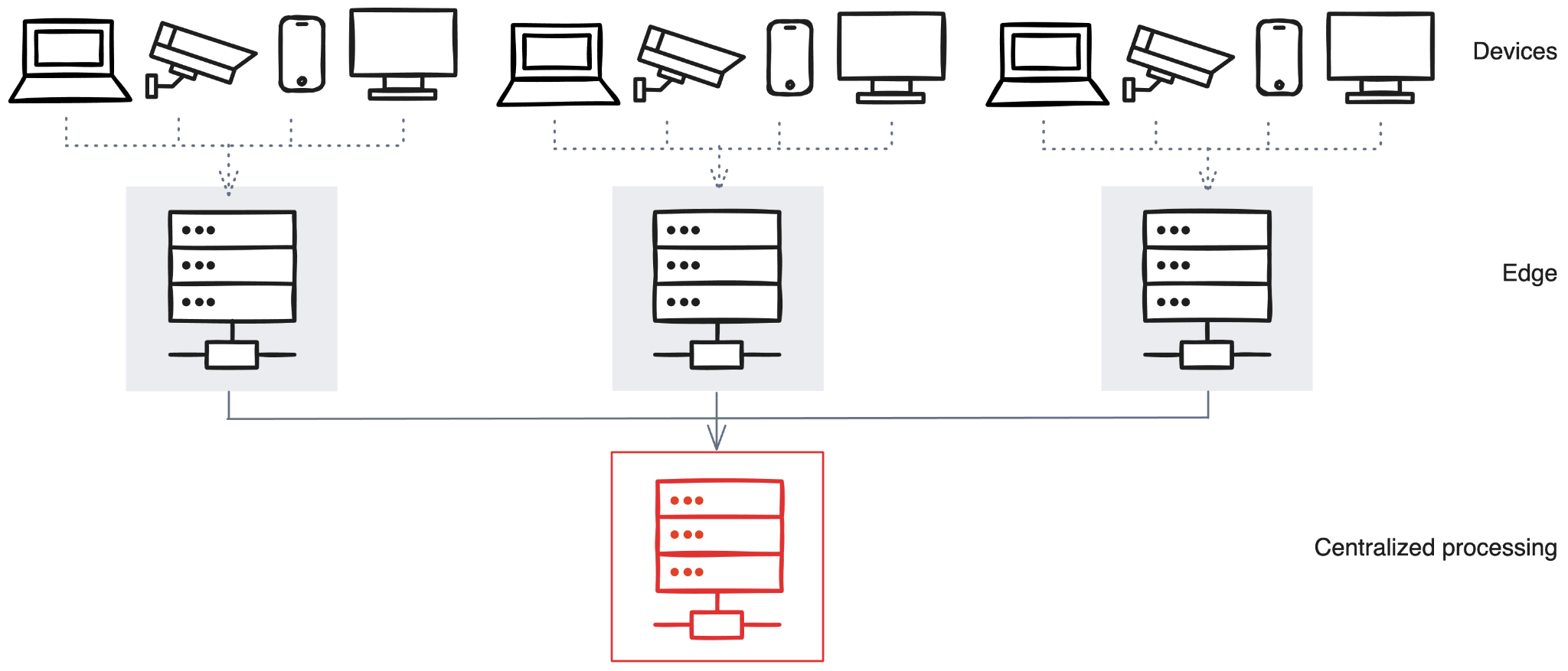

Running AI is all about location, location, location. All things being equal, the closer the AI processing happens to the data source, the lower the latency. This is because remote processing can introduce delays due to network communication and data transfer times. Also, the more intermediaries something has to pass through, the more latency it experiences (like speed bumps on the road).

Typically, AI will run in one of three different locations: device, edge, and centralized.

Here’s a quick introduction:

You can also opt for a hybrid approach to get the best of all worlds. As a real-world example, the New York Stock Exchange (NYSE) handles over one trillion records daily with sub-100ms latency by strategically combining edge and centralized processing.

Implementing real-time AI in trading can significantly enhance decision making, profitability, and risk management by reducing latency in trade execution.

The financial sector exemplifies the ultimate latency-sensitive application, but there are plenty more industries already embracing real-time AI. The report focuses on the five most popular domains:

In each of these, real-time AI shortens the time between insight and action, which in turn compresses the time between opportunity and impact. (And that can only mean good things for their bottom line.)

Just imagine what it could achieve in your field.

Real-time AI is already leaving a golden footprint in some of the world’s biggest businesses, but how’s the next frontier of applications shaping up? Well, the report highlights two areas in particular:

With the possibilities of AI swiftly transitioning from unimaginable to obvious, it’s wise to learn everything you can to meet AI where it’s at now and help shape the future of where it’ll go next. Remember, this post was just a taster. If you really want to dig into the concepts, architectures, and implementation strategies, go ahead and download the free ebook! (Last prompt, promise.)

You can also check out the resources below to keep the momentum going. Go forth and learn more.

[Website] How to build real-time AI the easy way

[Blog] Top AI agent use cases across industries

[Blog] What is agentic AI? An introduction to autonomous agents

[Docs] Retrieval-Augmented Generation (RAG) | Cookbooks

[YouTube] Bringing RAG to device with Redpanda Connect using LLama

[Infographic] Why event-driven data is the missing link for agentic AI

[On-demand] Intro to Agentic AI | Tech Talk on building private enterprise AI agents
